Furnace Monitor and Control System

Python

Oracle

Docker

PyQt5

Overview

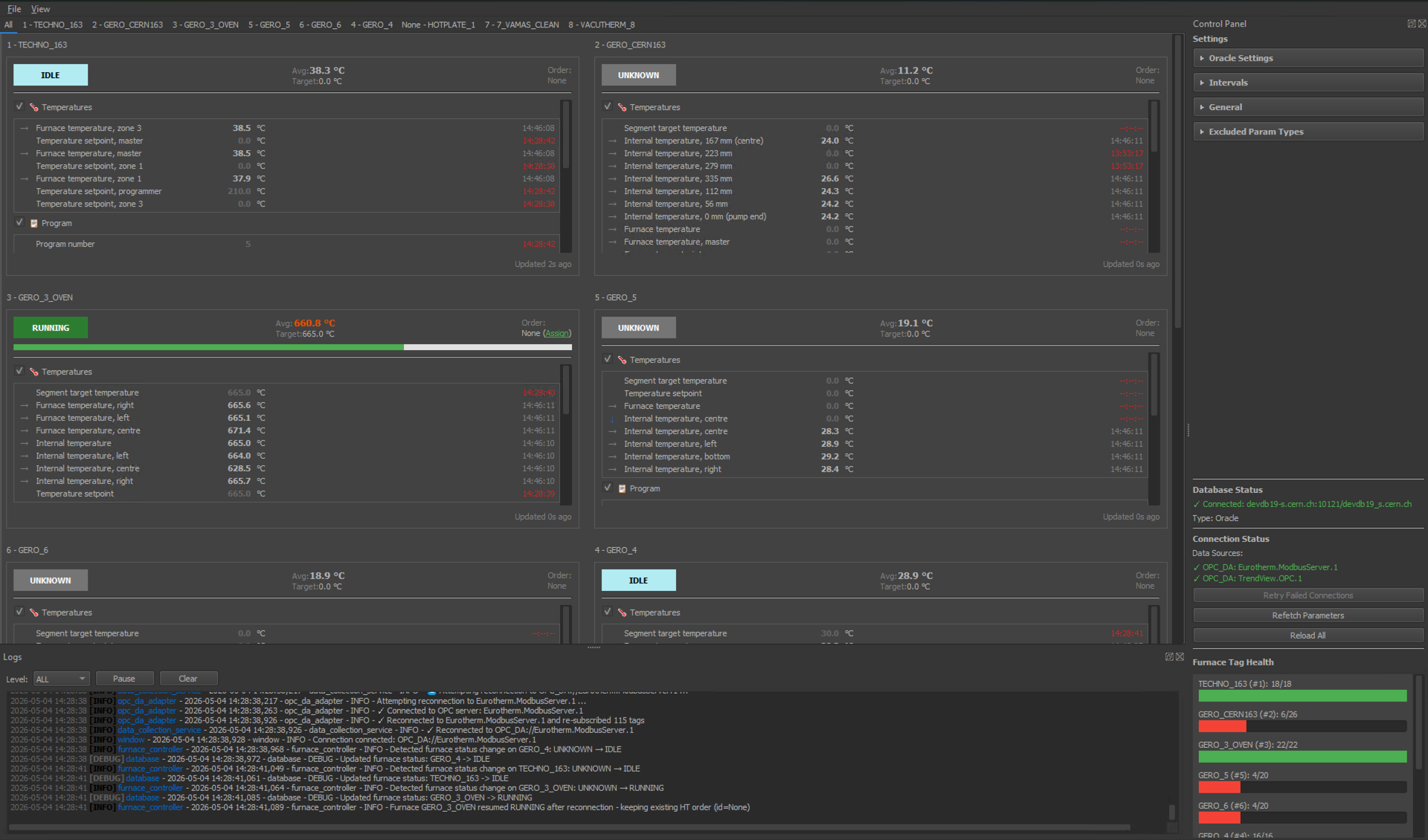

FMCS (Furnace Monitor and Control System) is a Windows desktop application I developed during my placement at CERN, deployed to automate the heat treatment workflow for superconducting magnet components. It acts as middleware between the CERN Oracle database and a bank of Eurotherm furnace controllers, eliminating the need for operators to interact directly with the furnace control systems.

The application retrieves heat treatment programs from Oracle, transfers them to controllers via S2KPE, collects real-time operational data over OPC DA, and syncs measurements back to the database for full traceability, running continuously as a background service.

System Architecture

FMCS follows a service-oriented architecture with a clear separation between data collection, state management, database synchronisation, and UI.

    Oracle Database
    │ ▲
    │ │ HT programs in / measurements out
    ▼ │
    FMCS Middleware (Python / PyQt5)
    ├── Data Collection Service ──OPC DA──► Eurotherm Controllers
    ├── Oracle Sync Service ──────────► Oracle (time-series writes)
    ├── HT Order Service ──S2KPE──► UIP program transfer
    └── Furnace Controller / State Manager

Services

Data Collection Service runs on a dedicated thread and manages OPC DA connections to each furnace controller and recorder (Eurotherm, TrendView). It uses COM event-driven callbacks with pythoncom message pumping on the main thread for Windows COM compatibility.

Oracle Sync Service runs its own sync thread and implements an adaptive-interval strategy: faster polling during active heat treatments, slower when furnaces are idle. Each parameter carries its own log_interval_run and log_interval_idle configuration. To keep memory usage bounded, readings are aggregated between sync intervals, which significantly reduces the number of values that have to be stored.
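The adaptive interval and aggregation idea can be sketched roughly as follows. This is a minimal illustration, not the FMCS code: the ParameterLogger class and its add method are hypothetical names, and using the mean as the aggregate is an assumption (the real service may aggregate differently). Only log_interval_run and log_interval_idle come from the description above.

```python
from dataclasses import dataclass, field
from statistics import fmean
from typing import List, Optional


@dataclass
class ParameterLogger:
    """Hypothetical helper: buffers readings for one parameter and
    emits a single aggregated value per logging interval."""

    log_interval_run: float   # seconds between writes while a treatment runs
    log_interval_idle: float  # seconds between writes while the furnace is idle
    _buffer: List[float] = field(default_factory=list)
    _last_flush: Optional[float] = None

    def interval(self, running: bool) -> float:
        """Pick the interval that matches the current furnace activity."""
        return self.log_interval_run if running else self.log_interval_idle

    def add(self, value: float, now: float, running: bool) -> Optional[float]:
        """Buffer a reading; return an aggregate once the interval has elapsed."""
        if self._last_flush is None:
            self._last_flush = now  # the first reading starts the first interval
        self._buffer.append(value)
        if now - self._last_flush >= self.interval(running):
            aggregate = fmean(self._buffer)  # assumption: mean of buffered values
            self._buffer.clear()
            self._last_flush = now
            return aggregate
        return None
```

With log_interval_run=10 and log_interval_idle=60, three readings at t=0, 5 and 10 seconds during a run collapse into one value to write, while an idle furnace produces one value per minute.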

HT Order Service monitors active heat treatment orders, automatically associating incoming readings with the correct order ID so every measurement is traceable back to the job it came from.
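As a rough sketch of how readings might be associated with an order, the snippet below keeps a map of furnace to active order and stamps each reading on the way through. Reading, OrderTracker and their fields are hypothetical names, and the sketch assumes at most one active order per furnace.

```python
from dataclasses import dataclass, replace
from typing import Dict, Optional


@dataclass(frozen=True)
class Reading:
    """Hypothetical reading record; order_id is filled in by the tracker."""
    furnace_id: int
    parameter: str
    value: float
    order_id: Optional[int] = None


class OrderTracker:
    """Tracks the active order per furnace (assumed: at most one at a time)."""

    def __init__(self) -> None:
        self._active: Dict[int, int] = {}

    def start(self, furnace_id: int, order_id: int) -> None:
        self._active[furnace_id] = order_id

    def finish(self, furnace_id: int) -> None:
        self._active.pop(furnace_id, None)

    def tag(self, reading: Reading) -> Reading:
        """Return a copy of the reading stamped with the active order, if any."""
        return replace(reading, order_id=self._active.get(reading.furnace_id))
```

Readings that arrive while no order is active keep order_id as None, so idle measurements remain distinguishable from those belonging to a job.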

S2KPE Integration transfers compiled UIP heat treatment programs from the database to Eurotherm controllers by shelling out to S2KPE.exe with the correct arguments, abstracting the proprietary controller interface behind a clean Python wrapper.
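A wrapper of this kind might look something like the sketch below. The command-line arguments S2KPE.exe expects are not given here, so the caller supplies them as-is; run_transfer_tool and TransferError are hypothetical names, and treating a non-zero exit code as failure is an assumption about the tool's behaviour.

```python
import subprocess
import sys
from pathlib import Path
from typing import Sequence


class TransferError(RuntimeError):
    """Raised when the external transfer tool cannot be run or reports failure."""


def run_transfer_tool(exe: Path, args: Sequence[str], timeout_s: float = 60.0) -> str:
    """Run an external tool and return its stdout, raising on any failure.

    The argument list is passed through untouched; nothing here encodes the
    real S2KPE command line.
    """
    try:
        result = subprocess.run(
            [str(exe), *args],
            capture_output=True,
            text=True,
            timeout=timeout_s,
            check=False,
        )
    except OSError as exc:  # executable missing or not runnable
        raise TransferError(f"could not start {exe}: {exc}") from exc
    except subprocess.TimeoutExpired as exc:
        raise TransferError(f"{exe} timed out after {timeout_s}s") from exc
    if result.returncode != 0:  # assumption: non-zero exit code means failure
        raise TransferError(
            f"{exe} exited with code {result.returncode}: {result.stderr.strip()}"
        )
    return result.stdout


if __name__ == "__main__":
    # Stand-in demo: the Python interpreter plays the role of the real tool.
    print(run_transfer_tool(Path(sys.executable), ["-c", "print('transfer ok')"]))
```

Turning every failure path into one exception type makes it harder for a transfer to fail silently, since the caller has to handle or propagate it explicitly.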

Protocol Adapter Layer

The data layer uses a pluggable ProtocolAdapter interface. The current implementation is OPC DA (COM automation), with the architecture designed to accommodate OPC UA, Modbus, or other industrial protocols without changes to the service layer. Each adapter handles duplicate event filtering and quality code mapping (OPC quality 192 = GOOD).
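One way such an interface could be shaped is sketched below. Only the ProtocolAdapter name and the 192 = GOOD mapping come from the description above; the method names, the listener signature and the FakeOpcDaAdapter stand-in are assumptions for illustration. The quality mapping reads the top two bits of the OPC DA quality byte, which is how 192 (0xC0) comes to mean GOOD.

```python
from abc import ABC, abstractmethod
from typing import Callable, Dict, Tuple

OPC_QUALITY_GOOD = 192  # 0xC0, as stated in the text

# Listener receives (tag, value, mapped quality); signature is an assumption.
Listener = Callable[[str, float, str], None]


class ProtocolAdapter(ABC):
    """Hypothetical adapter base class: the service layer only sees this API."""

    def __init__(self, on_value: Listener) -> None:
        self._on_value = on_value
        self._last: Dict[str, Tuple[float, str]] = {}

    @abstractmethod
    def connect(self) -> None:
        """Open the protocol-specific connection."""

    @abstractmethod
    def map_quality(self, raw_quality: int) -> str:
        """Translate a protocol-specific quality code into a common label."""

    def handle_event(self, tag: str, value: float, raw_quality: int) -> bool:
        """Forward a change to the listener; drop exact duplicates."""
        quality = self.map_quality(raw_quality)
        if self._last.get(tag) == (value, quality):
            return False  # duplicate event, nothing new to report
        self._last[tag] = (value, quality)
        self._on_value(tag, value, quality)
        return True


class FakeOpcDaAdapter(ProtocolAdapter):
    """Stand-in for an OPC DA adapter; performs no COM calls."""

    def connect(self) -> None:
        pass  # a real adapter would create the COM connection here

    def map_quality(self, raw_quality: int) -> str:
        major = raw_quality & 0xC0  # top two bits carry the major quality
        return {0xC0: "GOOD", 0x40: "UNCERTAIN", 0x00: "BAD"}.get(major, "UNKNOWN")
```

An OPC UA or Modbus adapter would subclass the same base and override only connect and map_quality, leaving the duplicate filtering and the listener contract unchanged.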

State Machine

A FurnaceStateManager maintains each furnace's current status (IDLE, IDLE_HOT, RUNNING, ERROR, FAULT, etc.) and tracks program status transitions from Eurotherm's integer codes (1=RESET, 2=RUN, 4=HOLD, 8=HOLDBACK, 16=END) via a ProtocolMapper. State changes are emitted as Qt signals for the UI and sync service to react to.
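The sketch below shows one possible shape for this, using plain callbacks in place of Qt signals so it runs without PyQt5. The integer codes and the state names are taken from the text; the rules that map program status to furnace state, the hot_threshold_c parameter, and the decision to treat an unknown code as ERROR are all assumptions made for illustration.

```python
from enum import Enum
from typing import Callable, List


class ProgramStatus(Enum):
    """Eurotherm program status codes as listed in the text."""
    RESET = 1
    RUN = 2
    HOLD = 4
    HOLDBACK = 8
    END = 16


class FurnaceState(Enum):
    IDLE = "IDLE"
    IDLE_HOT = "IDLE_HOT"
    RUNNING = "RUNNING"
    ERROR = "ERROR"
    FAULT = "FAULT"


class ProtocolMapper:
    @staticmethod
    def program_status(code: int) -> ProgramStatus:
        """Map a raw integer code; raises ValueError for unknown codes."""
        return ProgramStatus(code)


# Assumption: a program in any of these statuses counts as a running furnace.
ACTIVE = {ProgramStatus.RUN, ProgramStatus.HOLD, ProgramStatus.HOLDBACK}

TransitionListener = Callable[[FurnaceState, FurnaceState], None]


class FurnaceStateManager:
    def __init__(self, hot_threshold_c: float = 50.0) -> None:
        self.state = FurnaceState.IDLE
        self._hot_threshold_c = hot_threshold_c  # hypothetical threshold
        self._listeners: List[TransitionListener] = []

    def subscribe(self, listener: TransitionListener) -> None:
        """Stand-in for connecting a slot to a Qt signal."""
        self._listeners.append(listener)

    def update(self, status_code: int, temperature_c: float) -> FurnaceState:
        """Derive the furnace state and notify listeners only on a change."""
        try:
            status = ProtocolMapper.program_status(status_code)
        except ValueError:
            new_state = FurnaceState.ERROR  # assumption: unknown code is an error
        else:
            if status in ACTIVE:
                new_state = FurnaceState.RUNNING
            elif temperature_c >= self._hot_threshold_c:
                new_state = FurnaceState.IDLE_HOT
            else:
                new_state = FurnaceState.IDLE
        if new_state is not self.state:
            old, self.state = self.state, new_state
            for listener in self._listeners:
                listener(old, new_state)
        return self.state
```

Emitting only on an actual change keeps the UI and the sync service from reacting to every incoming status update.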

Authentication

The application authenticates against the CERN Oracle database and uses CERN SSO (Keycloak OIDC) for user-facing login. The SSO implementation uses the OAuth 2.0 Device Authorization Grant flow, where the user opens a browser URL, authenticates via CERN's identity provider, and the desktop app polls for the token.
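The polling half of the device flow can be sketched as below, following the error codes defined in RFC 8628. The HTTP call is injected as a post function so the example stays free of any particular HTTP library or endpoint; poll_for_token, its parameters and the choice of exceptions are hypothetical, and the token URL and client ID must come from the identity provider's configuration.

```python
import time
from typing import Any, Callable, Dict

# Grant type identifier defined by RFC 8628.
DEVICE_GRANT = "urn:ietf:params:oauth:grant-type:device_code"

# post(url, form_fields) -> decoded JSON body; supplied by the caller.
PostFn = Callable[[str, Dict[str, str]], Dict[str, Any]]


def poll_for_token(
    post: PostFn,
    token_url: str,
    client_id: str,
    device_code: str,
    interval_s: float = 5.0,
    expires_in_s: float = 600.0,
    sleep: Callable[[float], None] = time.sleep,
    clock: Callable[[], float] = time.monotonic,
) -> Dict[str, Any]:
    """Poll the token endpoint until the user finishes logging in."""
    deadline = clock() + expires_in_s
    while clock() < deadline:
        response = post(
            token_url,
            {
                "grant_type": DEVICE_GRANT,
                "device_code": device_code,
                "client_id": client_id,
            },
        )
        if "access_token" in response:
            return response
        error = response.get("error")
        if error == "authorization_pending":
            pass  # user has not completed the browser step yet
        elif error == "slow_down":
            interval_s += 5.0  # RFC 8628: back off by 5 seconds
        else:
            # e.g. access_denied or expired_token
            raise PermissionError(f"device authorization failed: {error}")
        sleep(interval_s)
    raise TimeoutError("device code expired before login completed")
```

Injecting sleep and clock alongside post keeps the loop testable without real waiting or network access.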

User Interface

The GUI is built with PyQt5 and styled with qtmodern.

- Tabbed furnace views: one tab per furnace, each showing live parameter readings, status, and the active HT order.

- System tray icons: per-furnace icons that show the furnace number and active order ID. Icons only appear for actionable states (ERROR, FAULT, IDLE_HOT, RUNNING); clicking one navigates directly to that furnace's tab.

- HT Program Editor: a visual editor for heat treatment profiles with a segment graph and plateau table, allowing operators to create, edit, and template programs before transferring them.

- Settings panel: collapsible sections for sync intervals, Oracle connection, database status, parameter types, and file paths.

- Live log viewer: docked log panel with real-time output from the application logger.

Packaging and Deployment

The application is packaged as a self-contained Windows installer built with Inno Setup and PyInstaller. The installer bundles the Oracle Instant Client for thick-mode DB connections, eliminating any dependency on a pre-installed Oracle client on the target machine. A CI/CD pipeline on CERN GitLab automates builds and publishes release artifacts.

Testing

The test suite uses pytest with a conftest.py fixture layer. Coverage spans the data models (Furnace, HTOrder), file adapters (Eurotherm T27/T35 format parsing), and the version script. The CI pipeline runs tests on every commit via .gitlab-ci.yml.

Key Outcomes

- Deployed and running in production in CERN Building 163

- Fully automated heat treatment workflow — no manual operator interaction with furnace controllers required

- Adaptive time-series aggregation keeps Oracle write load proportional to furnace activity

- Pluggable protocol adapter architecture ready for future OPC UA or Modbus expansion

- Self-contained Windows installer with bundled Oracle Instant Client for zero-dependency deployment

Reflections

Working within CERN's infrastructure brought constraints you don't encounter in typical software projects — COM automation on Windows, thick-mode Oracle connections, proprietary controller interfaces, and the need for rock-solid reliability in an environment where a failed heat treatment cycle has real material cost. It pushed me to think carefully about failure modes: what happens when the OPC server drops a connection mid-run, when Oracle is unreachable, or when the program transfer to the controller silently fails.

The adaptive sync interval design was one of the more interesting engineering decisions. Logging at full rate continuously would have generated noise and unnecessary database writes; logging too slowly would have lost resolution during the critical ramp phases of a heat treatment program. Tying the interval to furnace state gave us the best of both.
